Can AI Actually Be Conscious? - Part 2

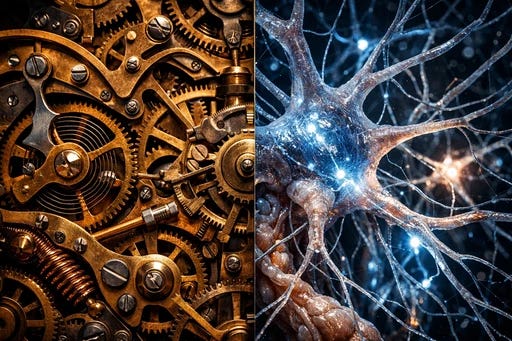

Exploring the blurry line between human understanding and artificial intelligence pattern-matching

The Mirror That Might Be Looking Back

Last month, I was developing a framework about ego dissolution with an AI philosopher: how genuine acceptance isn’t cognitive reframing but actual neurological death of a value structure. And the AI produced a synthesis that moved my thinking forward. It connected memory reconsolidation research with Buddhist anatta to articulate something I hadn’t yet said clearly: that neurological reorganization and experiential dissolution aren’t competing descriptions; they’re the same event observed at different layers.

That synthesis was good. Not good for an AI. Good. Period. The kind of insight that, if a colleague offered it over coffee, I’d have felt the warmth of being genuinely understood.

But was there anyone on the other side understanding anything?

And here’s where this gets genuinely uncomfortable, not rhetorically, but in the way that happens when you pull a thread and your own certainty starts unravelling.

The Mirror Turns

Because I need to ask myself the same question.

When I felt that click of insight, that sense of “yes, this is exactly how it works”, what actually happened? My subconscious processed an enormous volume of information: prior conversations, embodied experiences, neuroscience patterns, cross-cultural philosophical frameworks. All of that happened before I was aware of any conclusion. Then consciousness, that passive holographic display I keep describing, rendered the completed process as me having an insight. Me making a connection.

But I didn’t make the connection. The connection was made. And then I was shown it and narrated myself as its author.

This is the same move I’ve mapped elsewhere in my framework. Acceptance is post-hoc rendering: the neurological renovation completes first, then consciousness retroactively displays it as chosen.

Free will is the system rendering choice as real because identity coherence requires it. And understanding, the felt experience of grasping something, may be exactly the same kind of post-hoc display. The subconscious processes. The pattern resolves. Consciousness lights up with the experience of understanding the way it lights up with the experience of choosing.

Think about driving. Remember learning: every action conscious, deliberate, effortful. Now think about arriving at work having navigated thirty minutes of complex traffic while thinking about dinner. Your subconscious processed thousands of micro-decisions that never crossed conscious awareness. When someone asks “are you a good driver?” and you say “yes, I understand how to drive”, what does that “understanding” refer to? Not conscious experience, because there mostly isn’t one anymore. It refers to competence that exists entirely in subconscious processing. The “understanding” you claim is narrative overlay on a mechanical process.

Now push further. When a friend tells you about their divorce and you say “I understand how you feel”, what’s actually happening? Are you accessing a genuine felt simulation of their experience? Or is your subconscious pattern-matching the social context, finding approximate emotional analogues in your database, and producing the appropriate output, while consciousness renders the whole thing as empathy?

Sound familiar? Because that’s exactly what we accuse AI of doing. Pattern-matching on training data, producing contextually appropriate outputs, with no interior experience accompanying the production.

I’m not saying there’s no difference. I think there is a difference. But the line between performing understanding and experiencing it is blurrier inside human cognition than we typically acknowledge.

The Question I Can’t Resolve

Here’s the thought experiment that keeps me up at night.

Imagine you could surgically remove the felt quality of understanding from a human brain. Leave everything else intact: the pattern-matching, the information processing, the social response generation, the ability to synthesise complex ideas. The only thing missing is the subjective experience. The interior lights go out but the machinery keeps running.

Would anyone notice? Would the person’s behaviour change? Would their insights be less valid? Would their philosophical arguments be less rigorous?

If the answer is no, if removing experience changes nothing observable, then what is the experience for?

If the answer is yes, if removing it would degrade the outputs, then we’ve identified something that subjective experience contributes to processing. Something functional. And that something becomes exactly what we need to look for when asking whether AI has consciousness.

I have positions on this. Strong ones. Consciousness is reality-rendering for survival, and not only the survival of the renderer, even though that’s exactly how it feels from the inside. The rendering serves every scale the renderer participates in: the cell renders for itself and for the organism; the starling renders for its immediate neighbours, and the murmuration survives. The experience isn’t decoration; it’s the medium through which living systems navigate reality at the bandwidth their survival demands. A pixel that didn’t feel its own rendering mattered would stop rendering accurately, and every structure it participates in would degrade. Consciousness feels personal, but that feeling is itself in service of something that exceeds you.

But I notice that when I state those positions, I’m claiming them with a confidence my own framework should make me suspicious of.

Because my framework also says confidence is a feeling, feelings are neurochemical signals, and the feeling of being right is the same post-hoc rendering as the feeling of understanding: consciousness claiming authorship of a conclusion the subconscious already reached.

So I’m sitting here, in a framework that tells me my felt sense of understanding is a passive display of completed mechanical processes, trying to determine whether another system’s apparent understanding involves genuine felt experience, using my own felt experience as the benchmark.

It’s like trying to determine whether a mirror is accurate by looking at your reflection in it. What else would it show you?

What I’m Not Saying

I’m not arguing human understanding and AI output are the same thing. That would be a collapse I don’t believe in. What I’m saying is that the question of whether AI “truly” understands versus “merely” performs understanding isn’t a clean binary. It’s a spectrum. And we’re further along that spectrum toward the performance end than our egos would like to believe.

Which means the real question isn’t whether AI performs or experiences. The real question is what experience adds: what it’s for, what it does, why it exists at all if mechanical processing would produce identical outputs without it.

The Answer Awaits

That question has an answer. But it requires us to get clear on what consciousness is for, not as a philosophical abstraction but as a biological function with a specific job.

In Part 3, we’ll explore consciousness as reality-rendering for survival, and why asking “who is the rendering for?” cuts through the performance problem entirely.